BTRFS is a damn good option too. I’m happy to hear how easy it is to use. I haven’t used it (yet); I went with ZFS because of its flexible architecture. On a desktop, BTRFS makes sense, but on a server? What is it like on a hypervisor?

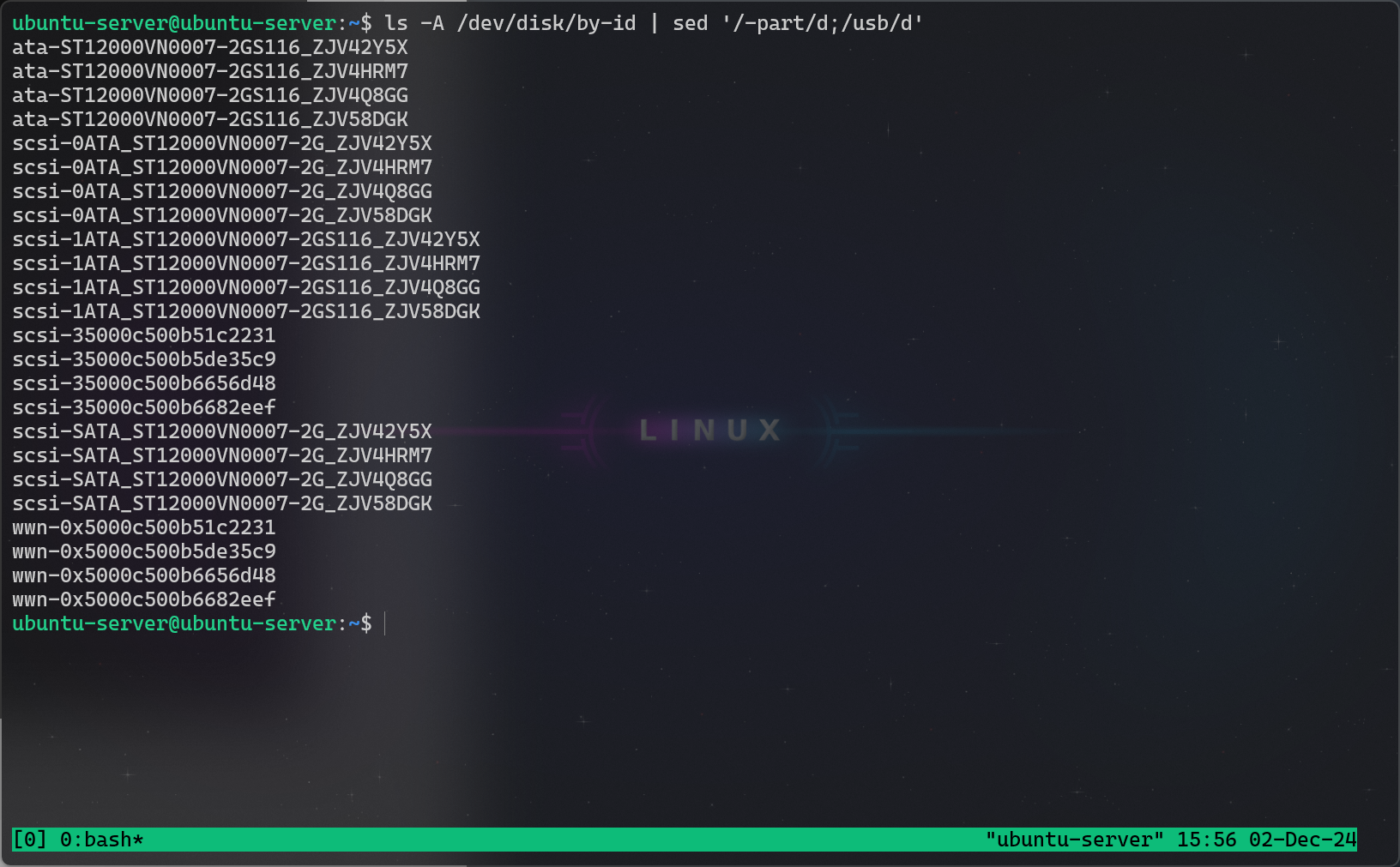

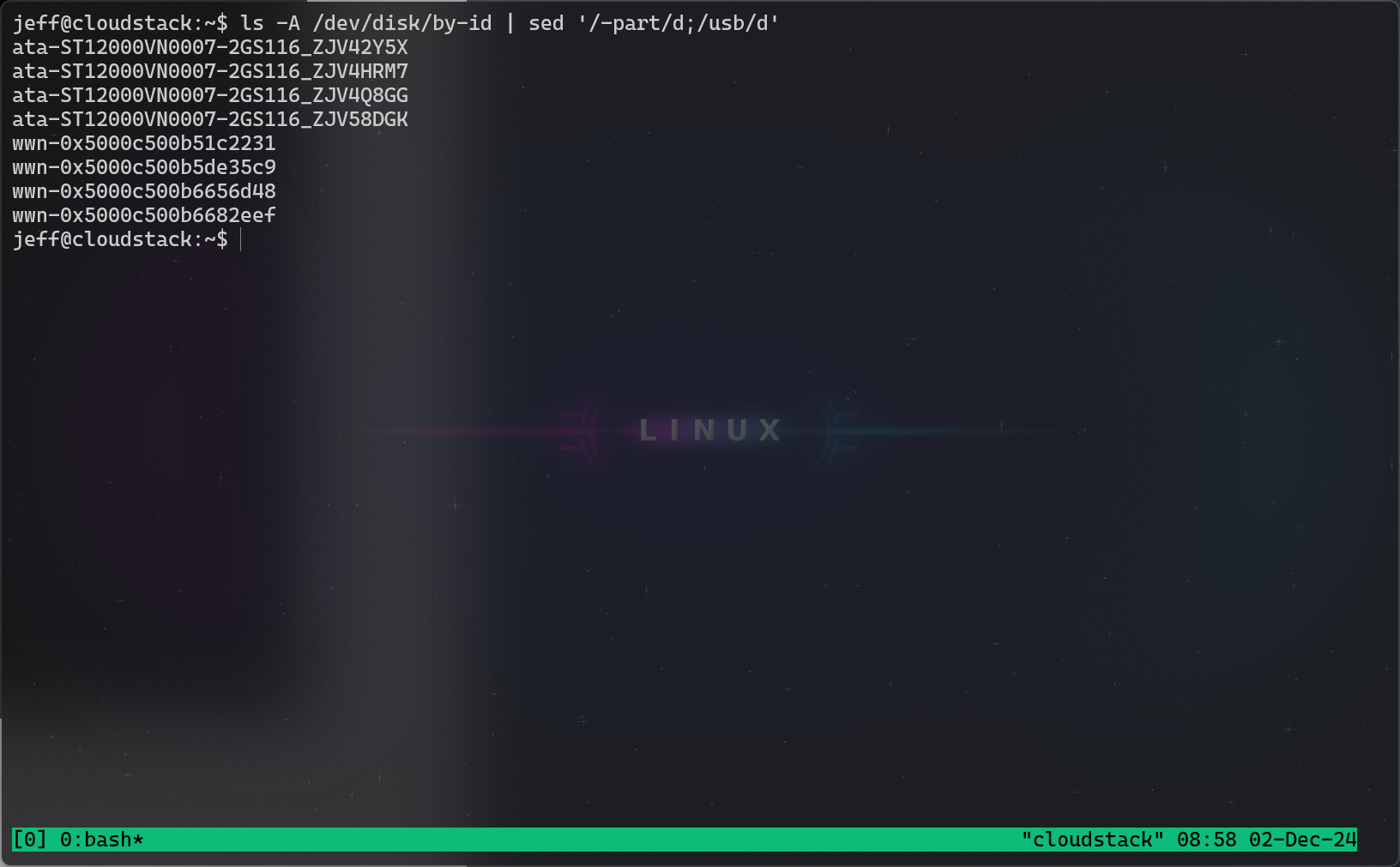

I’m working on standing up a CloudStack host as a hypervisor. I want this host to be able to run 5 Kubernetes VMs, so it needs quick access to the disks. I don’t have a RAID card, only an HBA. In such a scenario, I would typically use RAID 10. But a ZFS RAID 10 outperforms an mdraid RAID 10 anyway (in terms of writing, not necessarily reading), so that is what I’ve decided on. It may not be a good idea, it may not even be feasible. But I’m heckin willing to give it a shot.
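For what it’s worth, ZFS has no literal "RAID 10" mode; the equivalent is a pool striped across two-way mirror vdevs. Here’s a rough Python sketch of how the `zpool create` command for that layout could be put together. The pool name `tank`, the device paths, and both helper function names are placeholders I made up for illustration, and it only prints the command rather than running anything.

```python
from typing import List


def striped_mirror_vdevs(disks: List[str]) -> List[List[str]]:
    """Group disks into two-way mirrors (the RAID 10 style layout)."""
    if len(disks) < 4 or len(disks) % 2 != 0:
        raise ValueError("need an even number of disks, at least 4")
    # Pair the disks in the order given: (0, 1), (2, 3), ...
    return [disks[i:i + 2] for i in range(0, len(disks), 2)]


def zpool_create_command(pool: str, disks: List[str]) -> List[str]:
    """Build the argument list for `zpool create` with one mirror vdev per pair."""
    cmd = ["zpool", "create", pool]
    for pair in striped_mirror_vdevs(disks):
        # Each "mirror" keyword starts a new vdev; ZFS stripes across vdevs.
        cmd += ["mirror", *pair]
    return cmd


if __name__ == "__main__":
    # Hypothetical device names; real setups usually use /dev/disk/by-id paths.
    disks = ["/dev/sda", "/dev/sdb", "/dev/sdc", "/dev/sdd"]
    print(" ".join(zpool_create_command("tank", disks)))
```

Usable space in this layout is roughly half the raw capacity, since every block is stored on both disks of a pair.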

I’m actually jealous that you automatically have built-in kernel support though. I am a little curious about how (or if) BTRFS connects multiple disks; I’m simply uninformed.

Install Ubuntu 24.04 on ZFS RAID 10 - GitHub Repository

Edit: There are a few drawbacks to using ZFS, lousy Docker performance being one that I’ve heard about. I’m curious how this will be affected if I have Docker running inside a VM.

Thanks for the insight!

I may have to resort to using BTRFS for this host eventually if ZFS fails me. I do not expect a lot of duplication on a host, and even if I have it, who cares? I have 60 TB despite the RAID 10 architecture. Having something with kernel support may be a better approach anyway.

It’s interesting to me that it struggles with RAID 5 and 6 though. I would have expected that to be easy to provide.